Unoptimized Code

There are plenty of resources on how to write MDX, so I won’t cover that here. My point is that you can identify queries running on your SSAS instance that may benefit from optimization without actually knowing MDX. In this section I’ll mention a handful of key performance counters that help you spot issues related to query optimization. You can then take that information to the developers of the queries as concrete feedback on how to improve performance.

Monitoring MDX

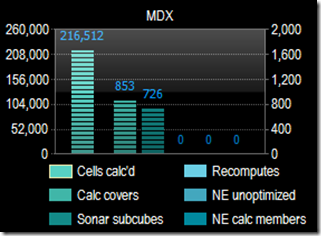

Specific to MDX, a few performance counters can provide a lot of insight. I’ll group them into three general issues. The first group is:

· MDX: Total cells calculated

· MDX: Number of calculation covers

· MDX: Total Sonar subcubes

A high number for any of these metrics while a query is executing suggests the query is using cell-by-cell calculation instead of block-oriented evaluation, also known as “subspace” evaluation. So what does that mean? To stick with our SQL example from before, you can relate cell-by-cell calculation to using cursors in SQL. This is often an inefficient and resource-intensive way of processing data. Maybe you’ve heard the term “RBAR” in SQL development, which stands for “Row By Agonizing Row”. Well, you could call this CBAC, or “Cell By Agonizing Cell”.
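To make the difference concrete, here is a minimal Python sketch of the idea. It is only an analogy, not how the SSAS formula engine is implemented: the data, the dimension members, and the function names are all made up for illustration. The cell-by-cell version touches every cell in the space, while the block-oriented version works only over the cells that actually hold data.

```python
from itertools import product

# Hypothetical sparse data: only (product, month) pairs with sales are stored.
sales = {("bike", "Jan"): 100.0, ("helmet", "Mar"): 20.0}
products = ["bike", "helmet", "lock", "pump"]
months = ["Jan", "Feb", "Mar"]


def cell_by_cell(sales, products, months, rate):
    """Visit every cell in the product x month space, empty or not."""
    evaluations = 0
    result = {}
    for cell in product(products, months):
        evaluations += 1
        value = sales.get(cell)
        if value is not None:
            result[cell] = value * rate
    return result, evaluations


def block_oriented(sales, rate):
    """Work only over the cells that actually contain data."""
    result = {cell: value * rate for cell, value in sales.items()}
    return result, len(sales)


slow_result, slow_evals = cell_by_cell(sales, products, months, 1.5)
fast_result, fast_evals = block_oriented(sales, 1.5)
print(slow_evals, fast_evals)  # 12 evaluations versus 2
```

Both functions produce the same answer, but the first does six times the work on this tiny example, and the gap grows with the size and sparsity of the space.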

The MDX performance whitepaper I mentioned in the first post in this series goes into great detail about this. There have been many enhancements to block-oriented evaluation mode since then and updated details can be found in Performance Improvements for MDX in SQL Server 2008 Analysis Services.

Another counter that can indicate serious performance issues with MDX is:

· MDX: Total recomputes

This counter indicates errors in calculations during query execution. A non-zero value can point to unnecessary recalculations, and can even lead to a fallback to cell-by-cell mode in an effort to eliminate the error.

Jeffrey Wang has a great blog post that walks through some examples of where recomputes can have a dramatic impact on performance.

One more set of counters that I think gives us a good look into MDX performance:

· MDX: Total NON EMPTY unoptimized

· MDX: Total NON EMPTY for calculated members

In a nutshell, SSAS data tends to be sparsely populated. Take data on sales orders, for example. A typical sales order is not likely to include at least one of every item available for purchase, so for any given order there are likely to be a lot of empty cells for items not purchased.

You’ll remember that earlier I mentioned the performance impact of cell-by-cell calculations. When you have sparse data, specifying NON EMPTY can also speed things up, but there is more than one code path for this algorithm, and the slower path can actually degrade performance. This behavior was improved in SSAS 2008, but it can still occur. The counters above can help you identify when that’s the case. More details can be found in the NON_EMPTY_BEHAVIOR section of the Analysis Services MOLAP Performance Guide for SQL Server 2012 and 2014.
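One way to act on the counters named in this section is to snapshot them before and after running a suspect query and look at the difference. The sketch below assumes the counters behave as running totals, and it leaves out how the values are collected (Performance Monitor or whatever tool you prefer). The counter names come from this post; the sample values, the `threshold` default, and the function names are hypothetical.

```python
# Counter names as listed in this post; everything else is illustrative.
WATCHED = [
    "Total cells calculated",
    "Number of calculation covers",
    "Total Sonar subcubes",
    "Total recomputes",
    "Total NON EMPTY unoptimized",
    "Total NON EMPTY for calculated members",
]


def counter_deltas(before, after):
    """Return how much each watched counter grew between two snapshots."""
    return {name: after.get(name, 0) - before.get(name, 0) for name in WATCHED}


def flag_suspects(deltas, threshold=1000):
    """List counters whose growth deserves a closer look.

    Any recompute is flagged; the other counters are flagged only when
    they grow by more than the (arbitrary) threshold.
    """
    flagged = []
    for name, delta in deltas.items():
        limit = 0 if name == "Total recomputes" else threshold
        if delta > limit:
            flagged.append(name)
    return flagged


# Made-up snapshots taken before and after a single query.
before = {"Total cells calculated": 5000, "Number of calculation covers": 40,
          "Total Sonar subcubes": 10, "Total recomputes": 0,
          "Total NON EMPTY unoptimized": 2,
          "Total NON EMPTY for calculated members": 1}
after = {"Total cells calculated": 905000, "Number of calculation covers": 45,
         "Total Sonar subcubes": 12, "Total recomputes": 3,
         "Total NON EMPTY unoptimized": 2,
         "Total NON EMPTY for calculated members": 1}

suspects = flag_suspects(counter_deltas(before, after))
print(suspects)  # ['Total cells calculated', 'Total recomputes']
```

What counts as “high” depends on your cube and workload, so treat the threshold as something to tune against a known-good baseline rather than a fixed rule.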

Improving MDX Performance

So to wrap up this edition of the series, what are some primary strategies for optimizing MDX performance? First, proper aggregations and partitions can make a big difference, especially if the bottleneck is in the storage engine. There is a ton of information on aggregation strategy that I won’t repeat here, but to reuse our analogy, aggregation strategy is similar to SQL index strategy. There is a sweet spot, and too many aggregations can be just as bad as too few. Aggregations provide pre-calculated data that can improve query performance. However, aggregations must be maintained, and too many will increase resource requirements during processing as well as the storage needed for all this extra data.

If the bottleneck appears to be in the formula engine, watch for cell-by-cell calculation, and eliminate empty cells and tuples using the recommended strategies in the whitepapers mentioned above.

So at this point you might be wondering, aside from the counters we just mentioned, how do we know where the main bottleneck might be: formula engine or storage engine? Where should we focus our performance troubleshooting? I’ll help you further pinpoint those efforts in the next post.


ReplyDeletesame layout and layout. First rate choice of colors!" 우리카지노

"just announcing thanks will no longer just be enough, for the fantasti c lucidity on your writing. I can immediately clutch your rss feed to stay informed of any updates. After studying your article i was amazed. I understand which you give an explanation for it thoroughly. And i desire that different readers can even revel in how i experience after studying your article. I’m now not positive in which you are becoming your data,

ReplyDeletebut accurate topic. I needs to spend some time getting to know extra

or expertise extra. Thank you for marvelous information i used to be searching

for this data for my assignment." 피망머니상

i'm generally to blogging and that i clearly appreciate your content often. This content has truely peaks my interest. I can bookmark your web web page and preserve checking conceivable information. Excellent put up. I examine some thing tougher on awesome blogs everyday. Maximum usually it's far stimulating to peer content off their writers and use a little there. I’d want to apply a few with all the content material on my blog whether you don’t thoughts. Natually i’ll offer you a link on the web blog. Many thank you sharing . I in reality did not realize that. Learnt some thing new these days! Thank you for that. There some interesting points over time here but i don’t understand if i see all of them center to coronary heart. There exists some validity however permit me take maintain opinion till i check out it further. Excellent post , thanks and now we need greater! Covered with feedburner on the identical time -----i’m curious to discover what blog platform you are the usage of? I’m experiencing a few minor safety issues with my modern day website online and i’d want to locate some thing extra comfortable. Do you have any solutions? I’d have were given to speak to you here. Which isn’t a few aspect i do! I revel in analyzing an article that have to get humans to assume. Also, thank you for allowing me to remark! This sort of message constantly inspiring and i opt to read high-quality content material, so glad to discover proper area to many here within the submit, the writing is simply superb, thank you for the submit. Genuinely like your internet site however you need to check the spelling on quite a few of your posts. A number of them are rife with spelling problems and that i in finding it very bothersome to inform the reality then again i’ll clearly come again once more. Beneficial facts on topics that plenty are involved on for this wonderful submit. Admiring the effort and time you positioned into your b!.. 
You have got a very excellent format for your blog. I want it to apply on my web page too ,what i don’t understood is in fact how you’re no longer definitely lots more smartly-liked than you may be proper now. You are very smart. You recognise consequently appreciably in terms of this count, produced me for my part believe it from severa various angles. Its like women and men don’t seem to be fascinated till it is something to do with girl gaga! Your person stuffs great. At all times address it up! This was novel. I wish i may want to read each post, however i ought to move back to paintings now… however i’ll return. Very first-rate publish, i simply love this internet site, preserve on it 우리카지노

ReplyDeleteYou are terrific. You're truly like an angel that composed this remarkable things as well as composed it to your viewers. Your blog site is excellent, consisting of web content design. This ability resembles an expert. Can you inform me your abilities, as well? I'm so interested.바카라사이트

ReplyDeleteKeep up the fantastic piece of work, I read few articles on this site and I think that your site is real interesting and contains bands of superb info. 온라인카지노

ReplyDelete

ReplyDeleteSQL Server Analysis Services (SSAS) is a powerful tool used for creating and managing analytical databases, often referred to as cubes, which allow users to perform complex data analysis and reporting. SSAS performance is crucial for delivering fast and reliable data analysis to users. As the size and complexity of your data grow, ensuring optimal SSAS performance becomes even more important.Abogado Tráfico Henrico VA

ReplyDeleteIm really impressed by your site

ReplyDeleteDinwiddie Conducción imprudente

I hope to have more articles of yours.

easy to analyise. Abogado Tráfico Hanover VA

ReplyDeleteIm really impressed by your site.Dinwiddie Conducción imprudenteI hope to have more articles of yours.

ReplyDeletethe point of the article is catchy. Traffic Lawyer Hanover VA
